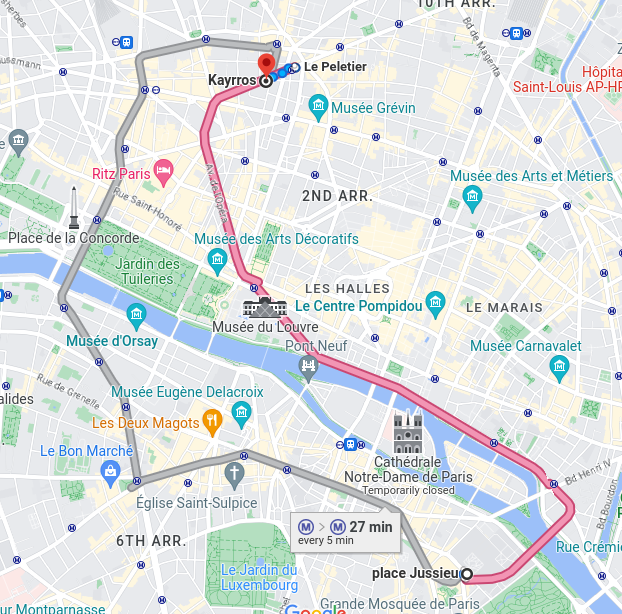

Room: 24-25 108 Campus Pierre et Marie Curie

Online: https://inria.webex.com/inria/j.php?MTID=mde59a1a59c9154e7c9aa68e57f692569

Abstract:

Artificial Intelligence promises to revolutionize how we understand complex systems like Earth’s climate. From predicting ocean currents to identifying patterns too complicated for the human eye, its potential is considerable. However, validation remains a key concern.

In this talk, I explore how AI, when used carefully, can advance our scientific enterprise by improving climate models and serving as a tool to gain new knowledge. Through examples from ocean and atmosphere dynamics, we will examine how machine learning can uncover governing equations, identify dominant processes, and reveal new patterns in ocean circulation. Using both supervised and unsupervised approaches, I’ll show how we can move beyond black-box predictions toward AI that learns in ways consistent with physics and meaningfully extends scientific understanding.

I have two goals: to highlight where AI can yield transformative insights for climate science, and to show how we can build the trust, transparency, and semantic grounding to distinguish genuine discoveries from illusions.

Biography:

Maike Sonnewald is an Assistant Professor in the Computer Science Department and a CAMPOS scholar. In 2016, Prof. Sonnewald received her PhD in Complex Systems Simulation through the National Oceanography Center at the University of Southampton, UK. Her doctoral work was followed by a postdoctoral position at the Department of Earth, Atmosphere and Planetary Sciences at the Massachusetts Institute of Technology. She then joined Princeton University as an Associate Research Scholar. She has held visiting positions at various institutions in the US and Europe. Her current affiliations include Affiliate Assistant Professor at the University of Washington School of Oceanography, Affiliate Researcher at NOAA-GFDL, and Visiting Scientist at Princeton.